
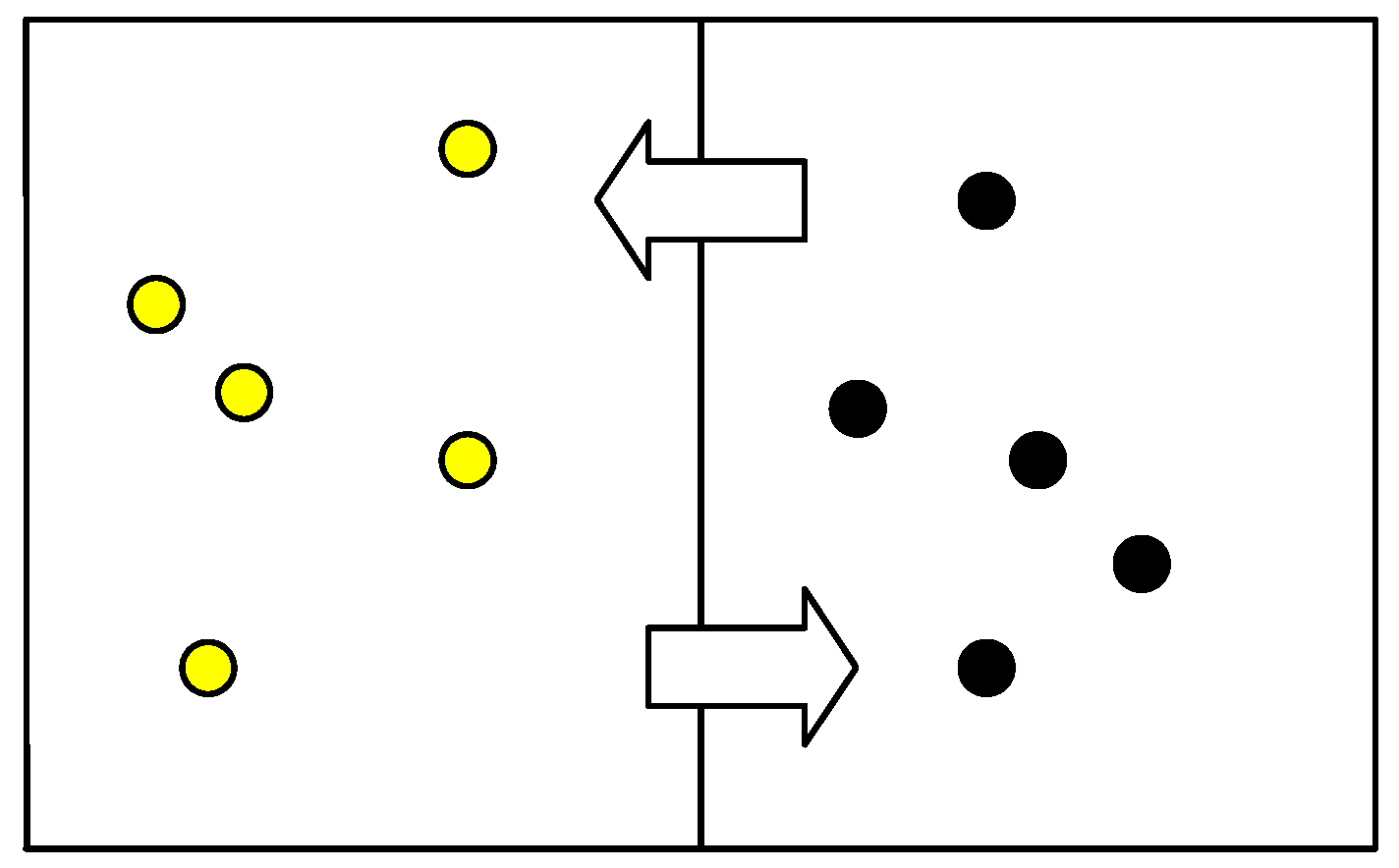

10/2/2023 0 Comments

Entropy definition biology

Misinterpretations of entropy, often conflated with misunderstandings of the second law of thermodynamics, are ubiquitous among scientists and non-scientists alike and have been used by creationists as the basis of unfounded arguments against evolutionary theory. Entropy is not disorder or chaos or complexity or progress towards those states. Entropy is a metric, a measure of the number of different ways that a set of objects can be arranged. Herein, we review the history of the concept of entropy from its conception by Clausius in 1867 to its more recent application to macroevolutionary theory. We provide teachable examples of (correctly defined) entropy that are appropriate for high school or introductory college level courses in biology and evolution. Finally, we discuss the association of these traditionally physics-related concepts to evolution. Clarification of the interactions between entropy, the second law of thermodynamics, and evolution has the potential for immediate benefit to both students and teachers. It is perhaps apropos that the concept of entropy has continuously picked up misunderstandings and misinterpretations that have left the concept bloodied, beaten, and unrecognizable. Although not immediately obvious, the inaccurate characterization of entropy weighs heavily in current events involving education, especially in national and international debates involving the teaching of evolution.

We haven't even begun to scratch the mathematical surface of these things. Thus my advice to you: when someone talks about entropy and it's not a chemical process engineer talking about the thermodynamic efficiency of the industrial chemical process that he is responsible for.
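The counting view of entropy mentioned above (a measure of the number of different ways a set of objects can be arranged) can be made concrete with a toy model. The sketch below is only an illustration under stated assumptions, not an example taken from the text: it assumes distinguishable particles that each sit in either the left or the right half of a box, treats every arrangement as equally likely, and applies Boltzmann's formula S = k_B ln W. The function names `multiplicity` and `boltzmann_entropy` are hypothetical.

```python
from math import comb, log

# Boltzmann constant in J/K (exact value in the 2019 SI definition).
K_B = 1.380649e-23


def multiplicity(n_particles: int, n_left: int) -> int:
    """Count the ways to choose which n_left of n_particles are in the left half."""
    return comb(n_particles, n_left)


def boltzmann_entropy(n_microstates: int) -> float:
    """Return S = k_B * ln(W) for a macrostate with W equally likely microstates."""
    return K_B * log(n_microstates)


if __name__ == "__main__":
    n = 10  # toy system size, chosen only for illustration
    for n_left in range(n + 1):
        w = multiplicity(n, n_left)
        print(f"{n_left:2d} on the left: W = {w:3d}, S = {boltzmann_entropy(w):.3e} J/K")
```

With 10 particles, the macrostate "all on one side" can be realized in exactly 1 way (so S = 0), while the even split can be realized in 252 ways. In this toy model the entropy difference between the two macrostates is purely a statement about counting arrangements.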

The definition of entropy can be found on Wikipedia. It's defined through the integral of the reversible heat flow divided by the temperature at which the flow occurs. This has, at face value, nothing to do with order and disorder, because there is no obvious way to even define structure in thermodynamics. One has to understand the link between thermodynamics and statistical mechanics to get a sense of where the second meaning of entropy comes into play. This link is given by the unproven and most likely unprovable ergodic hypothesis. While the ergodic hypothesis is quite plausible and physically very well realized for many systems, one can construct trivial cases for which it does not hold (especially for interaction-free systems like the ideal gas), which should give us some pause with regard to the mathematical problem with ergodicity. If we accept the ergodic hypothesis as "essentially correct" (in a physical, NOT in a strict mathematical sense), then we can recover thermodynamic entropy as a measure of order/disorder, which is definable in terms of counting microstates.

However, while in physics entropy is extremely well defined in both thermodynamic and statistical mechanics terms, many characterizations in the lay literature seem questionable at best, and there is quite a bit of overreach about the "meaning" and function of entropy IMHO. Part of the problem, as far as I can tell, is that much of the discussion about the dynamics of physical (and biological!) systems is still being carried out at the level of the 19th century, when the world seemed divided into (and fully explainable by!) either mechanics or thermodynamics. Physicists already knew at the end of the 19th century that this is not true, and mathematically the "trouble" with dynamical systems was already known to Newton, who failed to solve the three-body problem. What Newton couldn't know, and what is not at all captured by terms like entropy, is that there is a world of utterly complex dynamics between integrable mechanical systems and the thermodynamic limit.
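For reference, the thermodynamic definition mentioned above (the integral of the reversible heat flow divided by the temperature at which the flow occurs) is usually written as an entropy change between two equilibrium states A and B. The worked number that follows is an illustration of my own, assuming a latent heat of fusion for water of roughly 334 kJ/kg. Because melting happens at constant temperature, the integral reduces to a simple ratio:

$$
\Delta S = S_B - S_A = \int_A^B \frac{\delta Q_{\mathrm{rev}}}{T}
$$

$$
\Delta S_{\text{melting 1 kg of ice}} \approx \frac{Q_{\mathrm{rev}}}{T} = \frac{3.34 \times 10^{5}\ \mathrm{J}}{273.15\ \mathrm{K}} \approx 1.22 \times 10^{3}\ \mathrm{J/K}
$$

Nothing in this calculation refers to order, disorder, or structure. It uses only heat and temperature, which is the point made in the text about the purely thermodynamic definition.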
